Software is changing again.

That is the main point Andrej Karpathy makes in his talk, and I think it is the right framing.

AI is not just making developers faster at the same old work. It is changing what software is, who can create it, how we interact with it, and what kind of infrastructure we need to build next.

Karpathy calls this new layer Software 3.0.

And if you are a developer, student, founder, or anyone building in tech right now, it is worth understanding because this is not a small tooling shift. It is a new programming paradigm sitting next to the old ones.

Here are the biggest ideas from the talk and why they matter.

The Big Picture: Software Is Becoming Prompted, Learned, and Agentic

Karpathy’s big picture is simple but pretty profound:

Software is no longer just code written by humans for computers.

We now have three overlapping layers:

- Software 1.0: traditional code written directly by programmers

- Software 2.0: neural network weights trained from data

- Software 3.0: prompts, context, and instructions that program large language models

The interesting part is that these layers do not replace each other cleanly.

They stack.

A modern system may include traditional code, trained models, prompts, tool calls, context windows, agents, APIs, GUIs, and humans reviewing the output.

That means the developer’s job is changing from simply writing instructions to deciding which layer should handle which part of the work.

Sometimes the right answer is code. Sometimes it is a trained model. Sometimes it is a prompt with the right context. Increasingly, it is all three.

1. Software 1.0, 2.0, and 3.0 Are Different Ways to Program Computers

Karpathy starts by separating software into three eras.

Software 1.0 is the software most of us learned first. You write Python, JavaScript, Go, Rust, C++, or whatever language you use, and the computer follows explicit instructions.

Software 2.0 is neural networks. Instead of writing every rule by hand, you create datasets, define objectives, train models, and the resulting weights become the program.

This already changed huge parts of software. Karpathy saw this directly at Tesla, where more and more of the self-driving stack moved from hand-written C++ into neural networks.

Software 3.0 is the new layer: programming LLMs with natural language, examples, tools, and context.

That last piece is wild because the programming language is basically English.

You can give the model a goal, examples, files, docs, screenshots, or an error message, and it can produce useful computation in response. Not always perfect computation, but useful enough that it changes the workflow.

The practical takeaway is that developers now need to be fluent across all three layers.

It is not enough to say, “AI will write all the code.” That is too simplistic.

The better question is: Should this part be explicit code, trained behavior, or LLM-orchestrated behavior?
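To make the three layers concrete, here is a minimal sketch built around a toy sentiment task. The task, the word lists, the weight values, and every function name are assumptions chosen for illustration, not something taken from the talk. The Software 2.0 weights are made-up numbers standing in for what a training run would produce, and the Software 3.0 function only builds a prompt rather than calling a real model API.

```python
POSITIVE = {"great", "love", "excellent"}
NEGATIVE = {"bad", "hate", "terrible"}


def label(score: float) -> str:
    """Map a numeric score to a sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def classify_1_0(text: str) -> str:
    """Software 1.0: a programmer writes the rule by hand."""
    words = set(text.lower().split())
    return label(len(words & POSITIVE) - len(words & NEGATIVE))


# Software 2.0: the behavior lives in learned weights.
# Hypothetical values; a real system would learn these from data.
WEIGHTS = {"great": 1.2, "love": 0.9, "bad": -1.1, "hate": -1.4}


def classify_2_0(text: str) -> str:
    """Software 2.0: sum per-word weights instead of hand-written rules."""
    return label(sum(WEIGHTS.get(w, 0.0) for w in text.lower().split()))


def build_prompt_3_0(text: str) -> str:
    """Software 3.0: the program is natural language plus an example."""
    return (
        "Classify the sentiment of the review as positive, negative, or neutral.\n"
        "Review: I love it\nSentiment: positive\n"
        f"Review: {text}\nSentiment:"
    )


print(classify_1_0("I love this great phone"))
print(classify_2_0("I hate the battery"))
print(build_prompt_3_0("The screen is fine"))
```

The same task shows up three times, and what changes is where the behavior lives: in the rule, in the weights, or in the wording of the prompt.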

2. LLMs Look Less Like Apps and More Like Operating Systems

One of the strongest analogies in the talk is that LLMs are starting to look like operating systems.

Not because they replace macOS, Windows, or Linux directly, but because they sit underneath a growing ecosystem of applications.

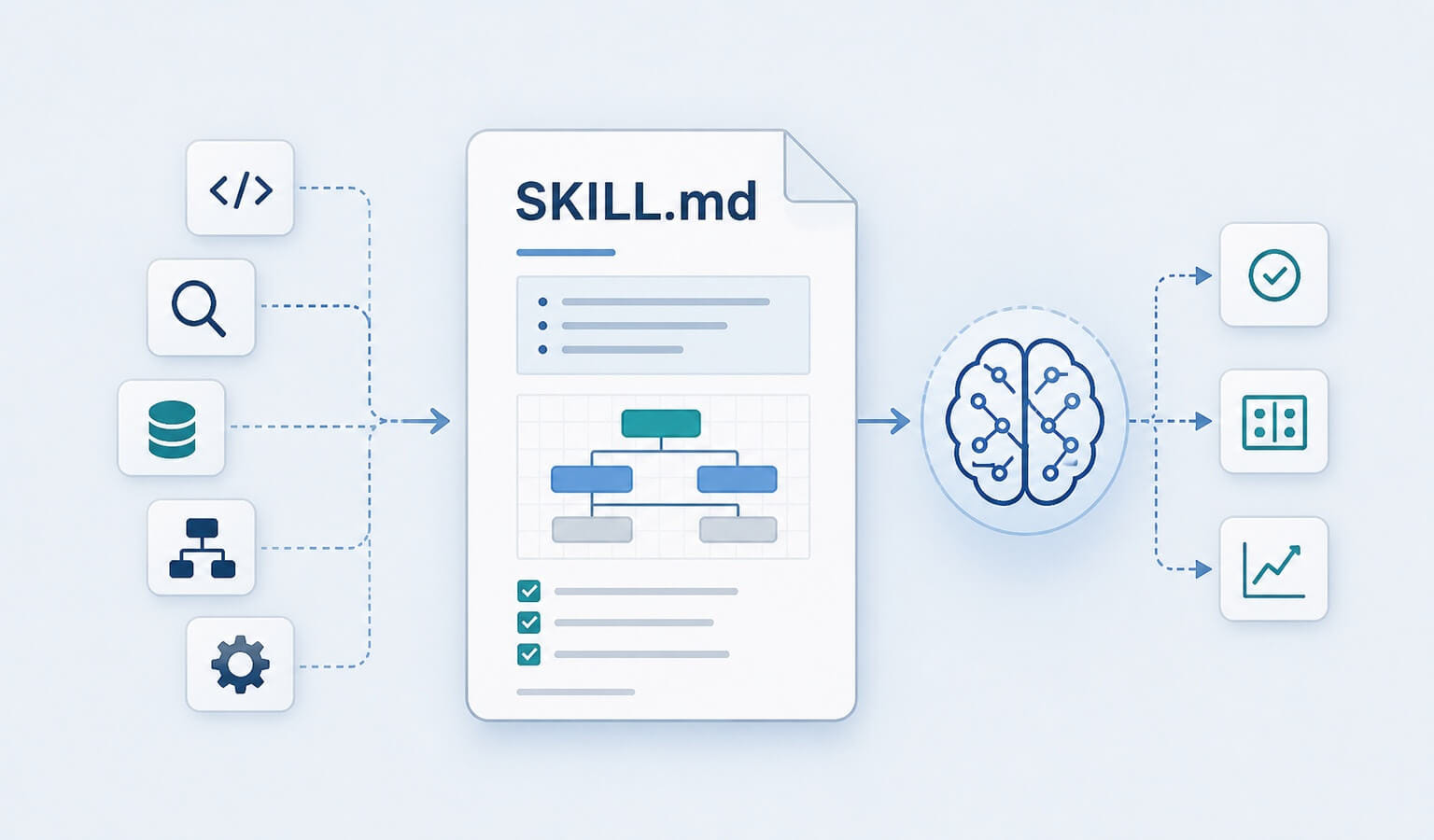

The LLM becomes the compute layer. The context window acts like memory. Tools, files, APIs, images, and audio become things the model can coordinate.

That starts to look a lot like an OS.

Karpathy points out that you can already see the shape of this ecosystem:

- Closed-source providers like OpenAI, Anthropic, and Google

- Open-source alternatives that resemble the Linux side of the world

- Apps that can run on different model providers

- Switching layers like OpenRouter that feel a little like choosing infrastructure providers

- A cloud/time-sharing model because the best models are still expensive to run locally

That last point is especially interesting.

LLMs today feel a bit like computing in the 1960s. The most powerful computers are centralized. Users access them through thin clients. Compute is expensive. Everyone is sharing time on large systems.

Personal computing for LLMs has not fully arrived yet.

Maybe it will. Maybe local models, Mac minis, NPUs, and open weights eventually make that normal. But right now, much of the intelligence layer still lives in the cloud.

3. AI Is Like a Utility, But It Is Not a Commodity

Karpathy also compares LLMs to utilities like electricity.

That analogy works in some ways.

Model providers spend huge capital to train models, then serve intelligence through APIs. We pay per usage. We expect low latency, high uptime, consistent quality, and failover options.

When major models go down, a surprising amount of work slows down with them. Karpathy likens this to an intelligence brownout.

That is a great phrase because it captures what many developers already feel.

When the model is down or worse than usual, the world suddenly feels a little dumber.

But LLMs are not just electricity.

They are complex software ecosystems with different capabilities, tools, personalities, modalities, and failure modes. One model may be better at coding. Another may be better at long context. Another may be better at images, research, or speed.

So AI is utility-like, but not fully commoditized.

That means model choice still matters.
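The failover idea from the utility analogy can be sketched in a few lines. This is a rough illustration, not a production pattern: the `primary` and `backup` functions are hypothetical stand-ins for whatever SDK or HTTP client you actually use, and `complete_with_failover` is a name invented here.

```python
from typing import Callable, Sequence

Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> tuple[str, str]:
    """Try providers in order; return (provider_name, reply) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in providers for the demo; real ones would wrap an SDK or HTTP client.
def primary(prompt: str) -> str:
    raise TimeoutError("primary model is down")


def backup(prompt: str) -> str:
    return f"backup reply to: {prompt}"


print(complete_with_failover("Summarize this diff", [("primary", primary), ("backup", backup)]))
```

Because models are not interchangeable, the fallback may answer differently from the primary, which is why the sketch returns the provider name along with the reply.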

4. LLMs Are Powerful, But They Have Weird Psychology

Karpathy describes LLMs as something like “people spirits,” which is a funny phrase but also weirdly useful.

They are trained on human text, so they pick up a human-like interface. You can talk to them. They can explain, reason, imitate, summarize, and generate.

But they are not humans.

They have some superpowers:

- Huge memory of facts and patterns

- Fast synthesis

- Strong performance in many technical tasks

- Ability to work across text, code, images, and tools

And they have some serious deficits:

- Hallucination

- Prompt injection risk

- Weak self-knowledge

- Jagged intelligence

- No native long-term memory in the human sense

- Tendency to sound confident when wrong

The memory point matters more than people realize.

A human coworker learns the organization over time. They sleep, consolidate, build intuition, and become more useful.

An LLM does not automatically do that. The context window is more like working memory. If you do not provide the right context, it may not know what matters.

That is why agent workflows need docs, memory, project files, rules, tests, and clear feedback loops. Otherwise every session starts half-amnesic.
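One way to picture the working-memory problem is a function that packs project knowledge into a limited context before each session. This is a simplified sketch under stated assumptions: `build_context` is a hypothetical helper, the section titles are invented, and a character budget stands in for a real token budget.

```python
def build_context(task: str, sources: list[tuple[str, str]], max_chars: int = 2000) -> str:
    """Pack the task plus as many sources as fit, in priority order."""
    parts = [f"## Task\n{task}"]
    used = len(parts[0])
    for title, body in sources:
        section = f"## {title}\n{body}"
        cost = len(section) + 2  # +2 for the blank-line separator added by join
        if used + cost > max_chars:
            continue  # skip anything that would blow the budget
        parts.append(section)
        used += cost
    return "\n\n".join(parts)


sources = [
    ("Project rules", "Use pytest. Keep diffs small."),
    ("Raw build log", "x" * 5000),  # too large to fit, so it is skipped
    ("Known issues", "Login test is flaky on CI."),
]
print(build_context("Fix the failing login test", sources, max_chars=300))
```

Listing sources by priority means the rules and notes that matter most get in first, and oversized material is dropped instead of crowding them out.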

5. The Best AI Apps Are Partially Autonomous, Not Fully Autonomous

Karpathy spends a lot of time on what he calls partial autonomy apps.

Cursor is a good example.

You could copy code into a chatbot and ask for changes. But that is clunky. A better AI coding tool understands your repo, retrieves files, shows diffs, applies changes, and lets you approve or reject work quickly.

That is partial autonomy.

The human still drives, but the AI takes bigger chunks of work.

Karpathy points out several traits these apps tend to share:

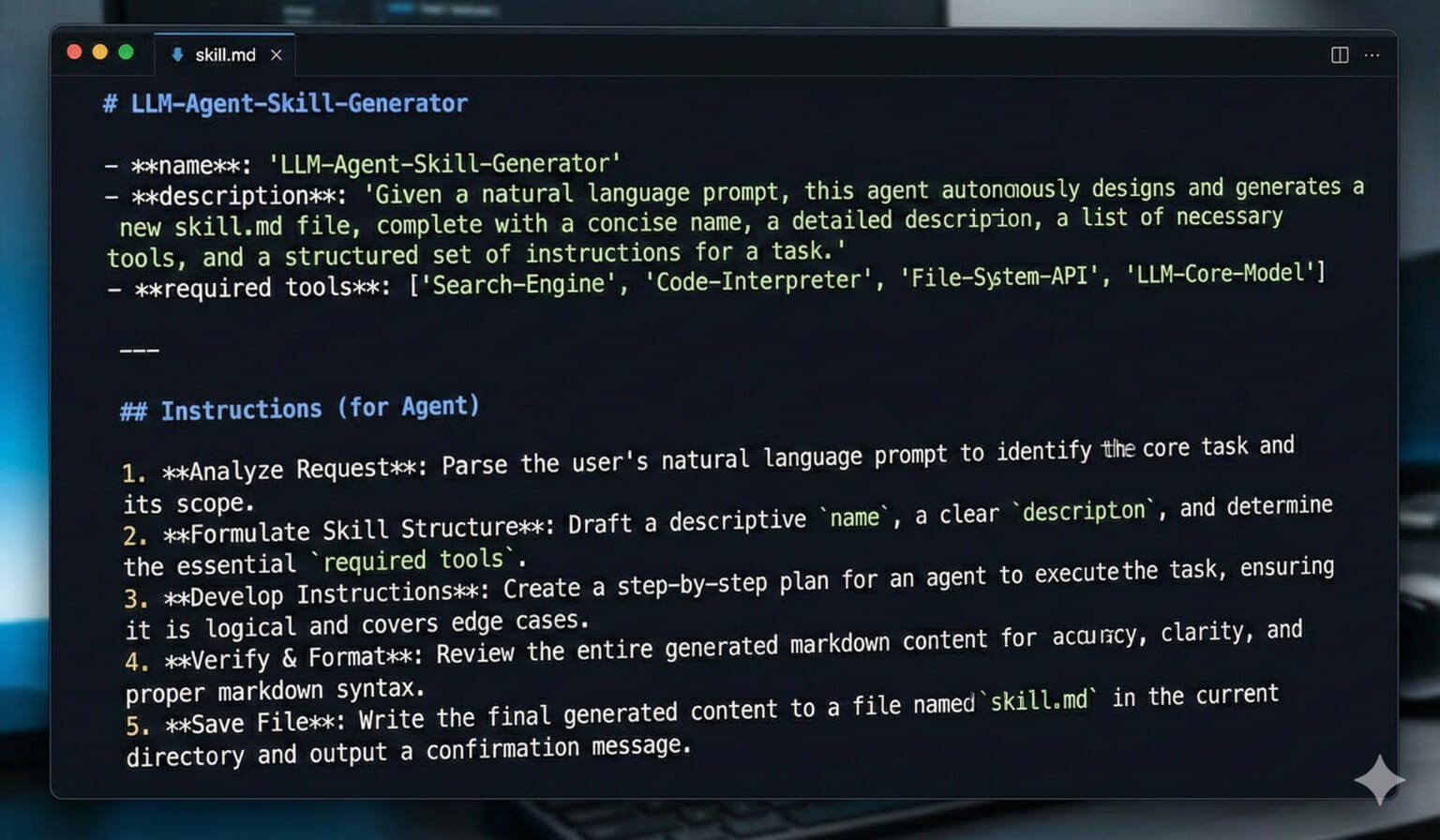

- They manage context for the model.

- They orchestrate multiple model calls behind the scenes.

- They provide a GUI that makes verification easier.

- They include an autonomy slider.

That autonomy slider is the key.

Sometimes you want autocomplete. Sometimes you want to edit one function. Sometimes you want the agent to modify a whole file. Sometimes you want it to work across the repo.

Those are different levels of trust.

The best tools let you choose the right level of autonomy for the task.
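A minimal sketch of the slider, assuming the four levels described above map onto an ordered enum. The `Autonomy` names and the `is_allowed` check are illustrative inventions, not how Cursor or any other tool actually implements it.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Slider positions, ordered from least to most trust."""
    AUTOCOMPLETE = 1
    EDIT_FUNCTION = 2
    EDIT_FILE = 3
    EDIT_REPO = 4


def is_allowed(requested: Autonomy, slider: Autonomy) -> bool:
    """An action is allowed only if it needs no more trust than the slider grants."""
    return requested <= slider


slider = Autonomy.EDIT_FUNCTION
for action in Autonomy:
    verdict = "allowed" if is_allowed(action, slider) else "needs a higher setting"
    print(f"{action.name}: {verdict}")
```

Treating the levels as ordered values keeps the trust decision explicit: raising the slider is a deliberate choice by the human, not something the agent grants itself.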

6. Verification Is the Bottleneck

This may be the most practical point in the whole talk.

AI can generate faster than humans can verify.

That means the bottleneck moves.

The old bottleneck was typing, searching docs, remembering syntax, and manually building every piece.

The new bottleneck is reviewing the output and deciding whether it is correct.

That is why giant AI-generated diffs are often not helpful. A 10,000-line change may appear instantly, but a human still has to review it for bugs, security issues, architectural mistakes, and product logic.

Karpathy argues that the generation-verification loop needs to be fast.

Two things help:

- Better interfaces for verification: diffs, previews, visual output, tests, screenshots, traces, logs

- Keeping the AI on a leash: smaller tasks, clearer prompts, narrower scope, incremental changes

This matches my own experience with AI coding tools.

The more specific the task, the better the result. The smaller the diff, the easier it is to trust. The stronger the tests, the faster you can move.

AI does not remove the need for discipline.

It rewards discipline.
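Here is one way the "leash" could look in practice: a small gate that runs before a human ever opens the diff. Treat it as a sketch under assumptions. The function names are hypothetical, the 200-line threshold is arbitrary, and the line count is a rough heuristic over unified diff text rather than a real diff parser.

```python
def count_changed_lines(diff: str) -> int:
    """Roughly count added and removed lines in a unified diff."""
    count = 0
    for line in diff.splitlines():
        if line.startswith(("+++", "---")):
            continue  # file headers, not content changes
        if line.startswith(("+", "-")):
            count += 1
    return count


def review_gate(diff: str, tests_passed: bool, max_lines: int = 200) -> str:
    """Decide what happens to an AI-generated change before human review."""
    if not tests_passed:
        return "reject: tests are failing"
    if count_changed_lines(diff) > max_lines:
        return "split: diff is too large to review carefully"
    return "ready for human review"


small_diff = "--- a/app.py\n+++ b/app.py\n@@ -1,2 +1,2 @@\n-old_value = 1\n+new_value = 2\n unchanged\n"
print(count_changed_lines(small_diff))
print(review_gate(small_diff, tests_passed=True))
```

The gate does not replace review. It only makes sure the human's attention goes to changes that are small enough, and green enough, to be worth reading.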

7. We Should Build Iron Man Suits Before Iron Man Robots

Karpathy uses the Iron Man analogy, and it is probably the best mental model for where we are.

An Iron Man suit augments the human. Tony Stark is still in the loop.

An Iron Man robot operates on its own.

Right now, most useful AI products should look more like suits than robots.

That does not mean fully autonomous agents will never work. It means the current models are fallible enough that the best products should help humans move faster while keeping them in control.

That is a more mature goal than flashy autonomous demos.

For developers building AI features, the question becomes:

How can I let the AI do more work while making it easy for the human to supervise?

That is where the product design challenge lives.

8. Vibe Coding Makes Everyone a Little More Like a Programmer

Because Software 3.0 is programmed in natural language, the pool of people who can create software-like behavior expands dramatically.

That is the point behind vibe coding.

Someone can describe an app, game, dashboard, animation, or workflow and get a working prototype without spending years learning the full stack first.

Karpathy sees this as a positive thing, especially for kids and beginners. It becomes a gateway into software development.

I agree.

Vibe coding does not replace professional engineering, but it does lower the barrier to entry. More people can build custom tools. More people can experiment. More people can experience the fun of making something that works.

That is good.

But there is a catch.

A local prototype is not the same as a real product.

Karpathy’s MenuGen example makes this painfully clear. The app prototype came together quickly. The hard part was making it real: authentication, payments, deployment, domain setup, provider dashboards, and all the annoying production plumbing.

The code was the easy part.

The infrastructure around the code was the hard part.

That is a huge clue for what needs to change next.

9. We Need to Build Software for Agents, Not Just Humans

Most software today is built for two consumers:

- Humans using GUIs

- Programs using APIs

Karpathy argues we now have a third consumer: agents.

Agents are computer-like, but they interact in human-like ways. They read docs. They follow instructions. They click things. They call tools. They manipulate digital information.

That means our software needs to become more legible to them.

A few examples:

- llms.txt files that explain a site or project to AI systems

- Markdown documentation designed for model ingestion

- Docs that include API calls instead of only “click this button” instructions

- MCP servers that expose actions directly to agents

- Tools that turn GitHub repos, docs, or websites into LLM-friendly text

This is a really practical point.

If your docs say, “Click here, choose this dropdown, then copy that value,” you are forcing the agent into a brittle browser workflow.

If your docs provide a clean CLI command, API call, or machine-readable configuration, the agent can act much more reliably.

Agent-friendly software will become a competitive advantage.
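As a small illustration of what agent-legible docs can look like, here is a sketch that renders an llms.txt-style file. It assumes the general shape described in the llms.txt proposal (a title heading, a short blockquote summary, and sections of annotated links). The `render_llms_txt` helper, the project name, and the example.com URLs are all hypothetical.

```python
def render_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render a title, summary, and link sections as llms.txt-style markdown."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines)


# Hypothetical project and URLs, for illustration only.
doc = render_llms_txt(
    title="Example Project",
    summary="A CLI for deploying static sites.",
    sections={
        "Docs": [
            ("Quickstart", "https://example.com/docs/quickstart.md", "Install and first deploy via CLI"),
            ("API reference", "https://example.com/docs/api.md", "HTTP endpoints with request examples"),
        ],
    },
)
print(doc)
```

The output is plain markdown an agent can read in one pass, and each link note says what the page lets it do rather than where to click.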

10. The Next Decade Is the Autonomy Slider Moving Right

Karpathy is careful not to overhype agents as a one-year revolution.

He compares it to self-driving cars. A perfect demo can make the future feel imminent, but real autonomy takes much longer because edge cases matter.

Software is the same way.

Agents will get better, but the path is likely incremental. We will move the autonomy slider from left to right over time:

- Human does almost everything

- AI assists small tasks

- AI edits scoped chunks

- AI completes workflows with approval

- AI handles larger projects with checkpoints

- AI operates more independently in narrow domains

That is probably a decade-long transition, not a single product launch.

The important thing is to build systems that can slide along that spectrum.

What Developers Should Do With This

If you are a developer, I would not treat this as optional background noise.

This is the work now.

Here is where I would focus:

- Stay strong in Software 1.0. Traditional code still matters. You need to understand what the agent is changing.

- Understand Software 2.0. You do not have to train frontier models, but you should understand how model behavior differs from explicit code.

- Get good at Software 3.0. Prompts, context, tools, specs, and evaluation are becoming part of the developer toolkit.

- Invest in verification. Tests, previews, diffs, logs, and type checks are how you move faster safely.

- Build smaller loops. Smaller prompts, smaller diffs, faster review.

- Make your tools agent-friendly. Clear docs, CLI paths, APIs, markdown, and repeatable commands matter more now.

- Think in autonomy sliders. Do not jump straight to full agents when partial autonomy would be more useful.

The developers who do well here will not be the ones who abandon fundamentals.

They will be the ones who combine fundamentals with the new layer.

Final Thoughts

Software 3.0 is not just a better autocomplete.

It is a new way to program computers using natural language, context, tools, and agents. That changes who can build, how products are designed, and what infrastructure needs to exist.

But the mature version of this is not “let the AI do everything.”

The mature version is building systems where humans and agents work together in tight loops, with clear verification and the right amount of autonomy for the task.

That is a much more realistic future.

And honestly, a much more interesting one.
